Updated: June 13, 2020

Recently, I read a bunch of articles that mentioned a change in how Google plans on tweaking site ranks in Google Search from next year. Today, the formula incorporates a number of user interactiveness elements, called Core Web Vitals, soon to be joined by page performance. I thought, now there's a bad idea.

Your first instinct would be - yo, old dinosaur - and it was mine, too, so I decided to actually check what gives. Google has a number of services available, like Google Search Console (the new Webmaster Tools), PageSpeed Insights, and a few others, which can help you check how your website is performing. So I did a check of Dedoimedo, and then scribbled this article. To wit.

Why page speed is meaningless

I've already written about this many many years ago, before the whole mobile hype, and the arguments still stand. But even more than that, page speed is not an indicator of the user experience in any way. It is, at best, an indicator of the software stack that serves the page. It is also an indicator of technical wealth, which I will explain in a few moments.

First, some simple mathematics, Core Web Vitals aside. A page with 100 lines of text and 10 images is smaller/shorter than one with 1,000 lines of text and 100 images. With no other consideration whatsoever, a page with more data should take longer to load. Does this tell us anything about the content? Nope.

So, excluding all other parameters, less content = more speed. Coincidentally - or not - this fits well into the dumbification patterns of the modern Web, which became a dominant force around 2014 or so, roughly the same time the mobile Internet (smartphones in a nutshell) became so prevalent.

This translates into short attention span and cheap consumerism. Writing content in as few characters as possible instead of trying to deliver a message that is as accurate and useful as it can be. Video and images instead of text. Of course, this is exactly what you need to keep the low-IQ masses entertained, because they cannot be bothered with long, complicated topics. After all, stupid people are good for business. They are more likely to spend money on frivolous things, more easily coerced or swayed into trends, more easily controlled. Hello, profits!

Second, the page loading tells us nothing of the nature of the page. A page where you need to fill in a form is completely different from one that summarizes the history of Norman conquests. With the former, responsiveness as opposed to pure speed could be important. With the latter, it's a meaningless value. Now, this isn't a trivial topic. There's deep, complex science behind this, and even I have done some serious research on this subject, and presented my work at various conferences in the past, but let's put that aside for now.

Practical example: any typical Linux review that I publish here. They are quite long, they usually have about 40K characters of text (so that would be around 2,500-3,000 words), and contain about 30-40 images on a single page. This content takes time loading - not much but some.

However ... the articles always start with two paragraphs of text, which probably take 15-20 seconds to read, if not longer. By the time the user has finished reading, all the assets have loaded ten times over. Speed has zero bearing on the user experience.
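To put rough numbers on that argument, here's a minimal Python sketch that compares the time needed to read the opening paragraphs with an idealized transfer time for the whole page. Everything in it is an assumption made for illustration: the 240 words-per-minute reading pace, the 70-word intro, the 3 MiB page weight and the 10 Mbit/s link are hypothetical values, not measurements from Dedoimedo, and the transfer model ignores latency, rendering and parallel downloads.

```python
def reading_time_seconds(word_count: int, words_per_minute: float = 240.0) -> float:
    """Time needed to read word_count words at the given pace."""
    return word_count / words_per_minute * 60.0


def transfer_time_seconds(total_bytes: int, megabits_per_second: float) -> float:
    """Idealized transfer time: payload size divided by bandwidth, nothing else."""
    bits = total_bytes * 8
    return bits / (megabits_per_second * 1_000_000)


# Hypothetical figures, not measurements from any real page.
intro_words = 70              # the two opening paragraphs
page_bytes = 3 * 1024 * 1024  # text plus images, assumed to total 3 MiB
link_mbps = 10.0              # assumed connection speed

read_s = reading_time_seconds(intro_words)
load_s = transfer_time_seconds(page_bytes, link_mbps)
print(f"reading the intro: {read_s:.1f} s, transferring the page: {load_s:.1f} s")
```

With these made-up inputs, the page finishes transferring well before the reader gets through the intro. Change the numbers to match your own page and connection, and the comparison may look different.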

But ... if you look at the page from a so-called "SEO" perspective, then it's "too long" for the supposed pundits. Without going into details or giving spotlight to software and ideas I resent, I've seen various "SEO" tools that recommend articles be no longer than 500-600 words or similar. On its own, this is a meaningless number, but then, this is what happens when you try to put pseudo-science around something that machines cannot really quantify.

And that's quality.

One thing that is never mentioned in any "SEO" stuff is quality. Indeed, this is something that no machine will ever be able to check. Quality is like trust - it takes time finding it, building it, nourishing it. Quality also has a long temporal function - you wouldn't necessarily decide something is "quality content" just by reading one or two sentences of a blog (well you could, if you are properly skilled in the domain). Generally, you would need multiple, repeated experiences to determine the level of consistency and accuracy in published material.

Going back to speed. Let's play along. If you want pages to load as quickly as possible, you want your content to be as short as possible, which probably means cutting down on quality. Then, you could potentially split content into multiple pages, but then you force readers to click on numbers or arrows time and time again. I'm not sure what the general sentiment is, but I find this pretty tedious, and try to refrain from doing it as much as I can (with only a few small exceptions).

Indeed, the concept of paginated web content (multiple pages serving "pages" of the same content) is a result of a pointless chase after speed, where speed isn't needed. Because if it's images we're talking about, image galleries are by definition a set of individual (and often independent) frames. If it's text, then we're talking long content, probably justifiably so, which means those who intend to read it actually have the patience for the whole thing to begin with. Thus, the speed consideration is actually a Catch-22 thing here.

All right, let's score some pages

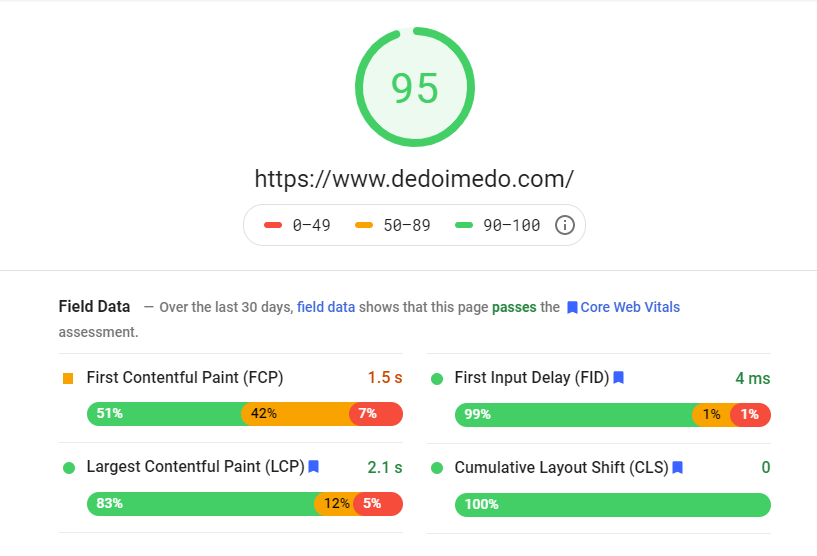

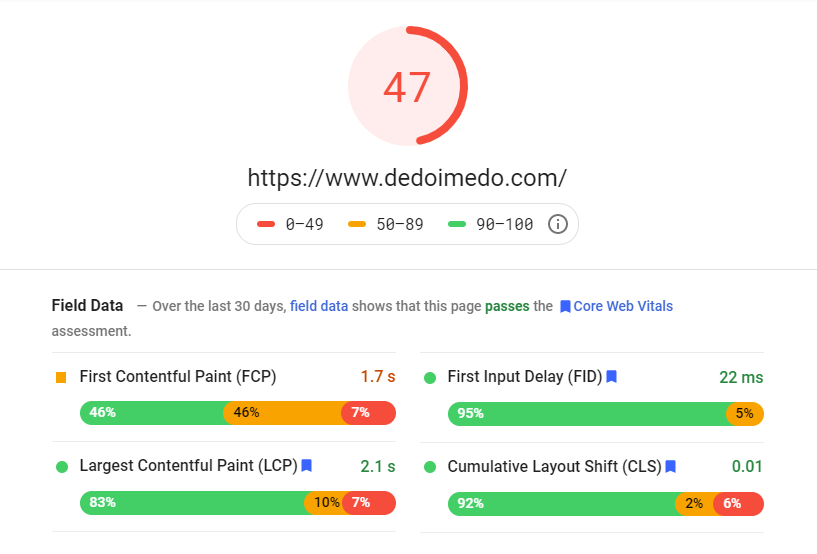

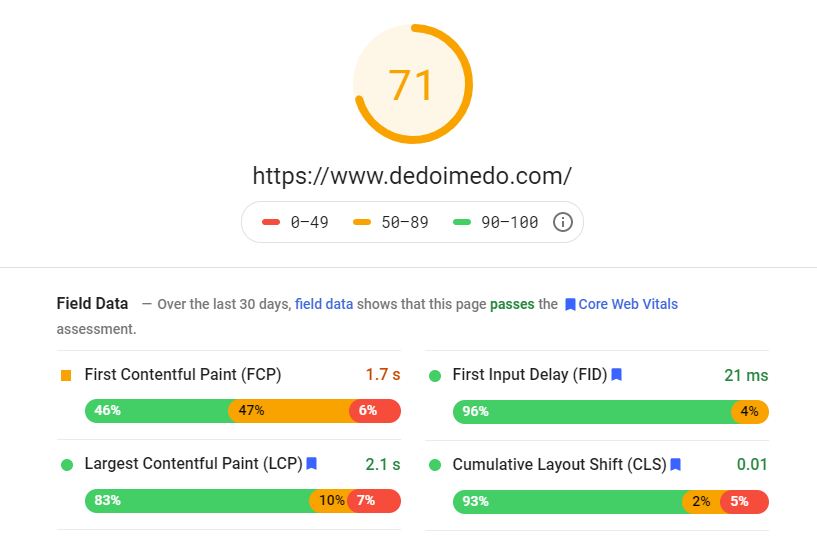

But like I said in the beginning - we need actual numbers. So I did some speed tests for dedoimedo.com, and let me show you the results. Please note that you actually get two separate sets of results - the way the pages are shown on the desktop, and the way they are shown on the phone (mobile). The score is between 0 and 100, and of course, the higher the better.

Looking at the data, desktop performance is good, mobile - not so. EXACTLY THE SAME content. The differences between the two are quite small. From what I can see, there's only a 200 ms difference in the FCP (First Contentful Paint) parameter.
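If you'd rather collect the desktop and mobile scores with a script than through the web form, here's a minimal Python sketch built around the public PageSpeed Insights v5 API. The function names are hypothetical, and it assumes the report layout as I understand it: the performance score sits under `lighthouseResult.categories.performance.score` as a 0-1 value, which the 0-100 figure is derived from. It also assumes occasional requests work without an API key, and it does no error handling for failed audits.

```python
import json
from urllib.parse import urlencode
from urllib.request import urlopen

PSI_ENDPOINT = "https://www.googleapis.com/pagespeedonline/v5/runPagespeed"


def psi_request_url(page_url: str, strategy: str) -> str:
    """Build a PageSpeed Insights request URL for 'desktop' or 'mobile'."""
    if strategy not in ("desktop", "mobile"):
        raise ValueError("strategy must be 'desktop' or 'mobile'")
    return f"{PSI_ENDPOINT}?{urlencode({'url': page_url, 'strategy': strategy})}"


def performance_score(report: dict) -> int:
    """Convert the 0-1 performance score found in a report to the 0-100 scale."""
    raw = report["lighthouseResult"]["categories"]["performance"]["score"]
    return round(raw * 100)


def fetch_report(page_url: str, strategy: str) -> dict:
    """Download and parse one report (needs network access, so not called here)."""
    with urlopen(psi_request_url(page_url, strategy)) as response:
        return json.load(response)
```

Calling `performance_score(fetch_report("https://dedoimedo.com/", "mobile"))` and then the same with `"desktop"` should give the two numbers for the same page, side by side.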

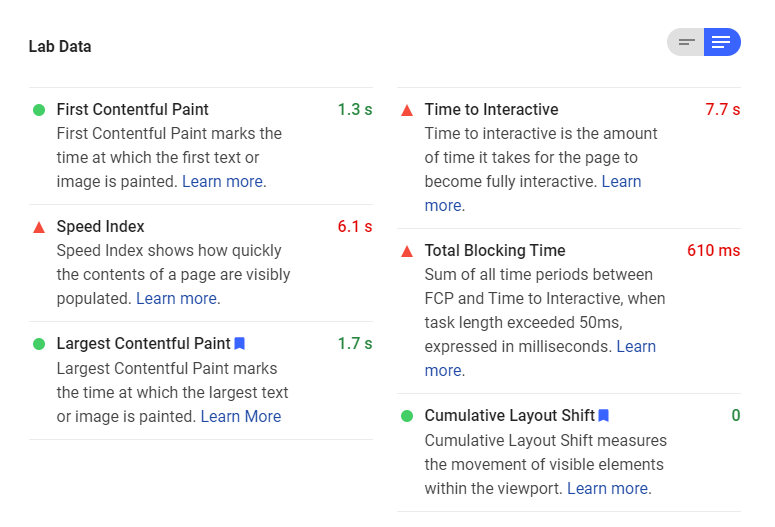

Now, Lab Data gives you more - and here we get some additional information. But again, without taking into account the NATURE of the page, the numbers don't mean much. For example, take Time to Interactive - there's no real interactiveness on my pages, just text and images. Read, look. A portion of this time - how quickly the contents of the page were visibly populated - is indicated by Speed Index. This goes back to my argument on how the speed is meaningless. The index page on Dedoimedo, for instance, shows the news headings for published content in the past few months. Obviously, if there were fewer, it would take less time to load. But that's not the point.

The numbers may look alarming, but believe me, Dedoimedo gets a higher score than most other websites out there. Try it, punch in a number of popular tech publications, and see for yourself.

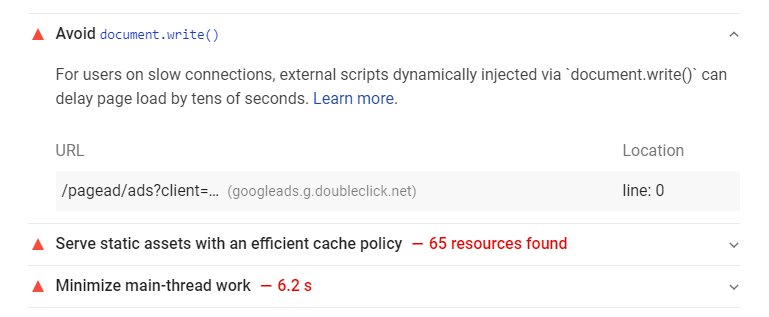

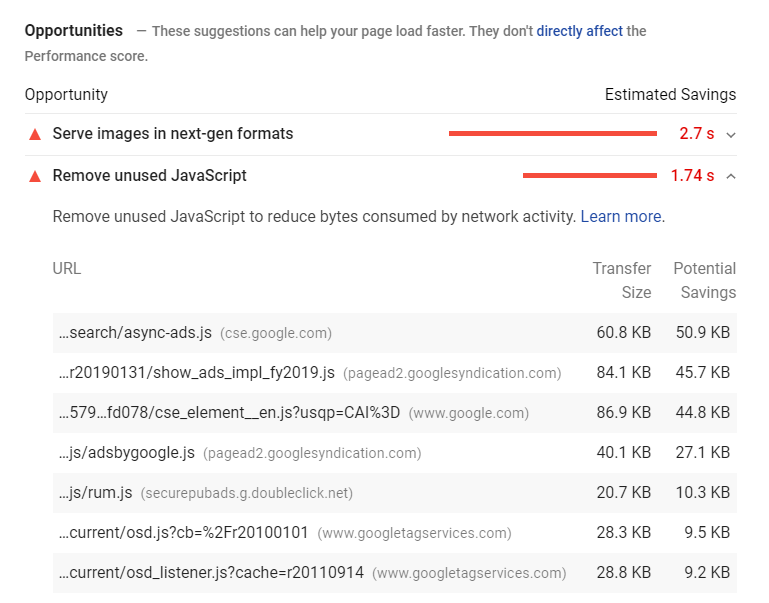

Now, to understand some more what gives, I decided to expand the results, and look at the different opportunities and diagnostics available in the report - basically recommendations that the algorithms behind this report suggest. Please note that these don't affect the score - but are telling in their own right.

Diagnostics-wise - it appears that some third-party code blocked the main thread (of what) for about 1 whole second. The breakdown shows that the primary culprit is actually Google Ads code that I use on my various pages. Similarly, the use of document.write() is flagged as another issue for those on slow connections, and again, this belongs to third-party code.

Opportunities - some interesting stuff there. Images. The suggestion is to use "next-gen" formats instead of the old JPG and PNG. Supposedly, this would save about 2.7 seconds of loading time, but then 2.7 seconds is less than it takes a person to read one line of text on any one page on my site. Practically, it makes no difference. But this goes back to my use of large sets of images in my articles. The very thing that helps people get the best value from my guides and tutorials. Now, this doesn't directly affect the score (not sure what this means), but then algorithms cannot really determine whether there's any value behind what I offer my users. The images themselves could be useless.

What I found curious again - unused JavaScript - all of it third-party content related to Google stuff that I'm using on Dedoimedo. Now, I pride myself on being precise and meticulous. I've always tried to implement best solutions so my users - the readers, the people who matter - can have the best experience.

This is one of the reasons why Dedoimedo has ZERO organic JavaScript - and the reason why it should be brisk. No cookies, no comments, nothing. The only third-party code is some basic analytics, search, some ads to try to earn a few bobs here and there, and the cookie overlay applet, which is required by GDPR and CCPA. But looking at the results above, the best thing I could do is to scrap third-party scripts altogether.

Now, I've not invented the code for any of the above. I'm using the ads code exactly as prescribed by Google. I'm using the cookie applet code exactly as prescribed by Civic. If there are opportunities to improve the loading, perhaps async functions and whatnot, then I am powerless in implementing them. I have no control over how the third-party companies create their code. My only control is in choosing to use them - and perhaps that choice also needs revisiting.

Then, the results also seem to differ quite a bit from one test to another. Here's the mobile result from just a couple of days after the first one was collected - I don't mind, but then, how am I to take these results into account, if they change so much without any input from my end?

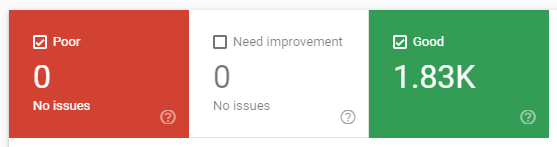

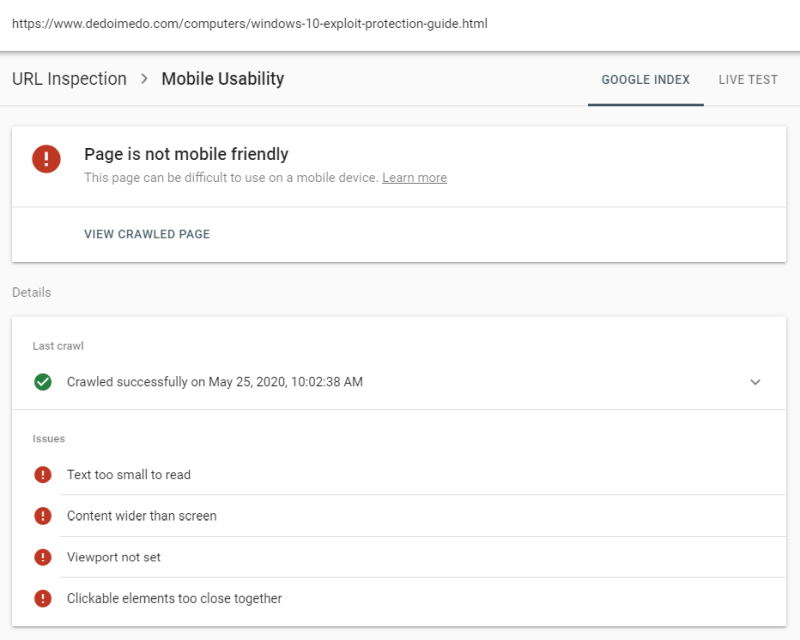

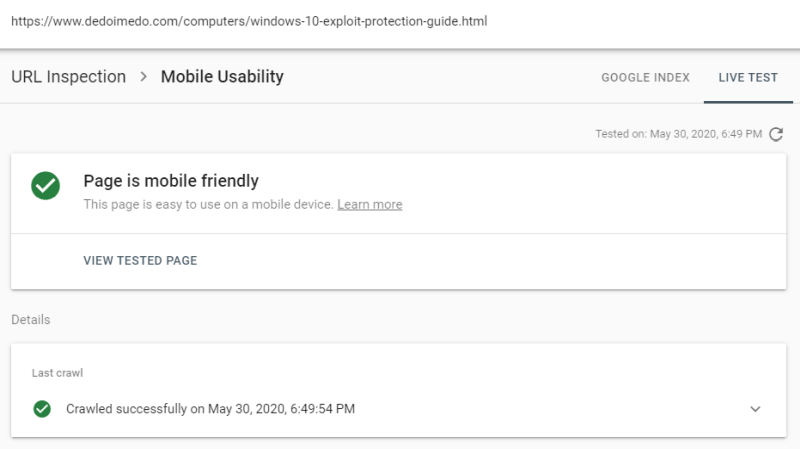
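Since the scores shift from one run to the next, a single sample says little. Here's a minimal Python sketch that summarizes several runs of the same test, so you can see the spread rather than one number. The function name and the five mobile scores are hypothetical, made up purely for illustration, not results from the reports above.

```python
from statistics import mean, median


def summarize_runs(scores: list[int]) -> dict:
    """Summarize repeated scores for the same page and the same strategy."""
    if not scores:
        raise ValueError("need at least one score")
    return {
        "runs": len(scores),
        "lowest": min(scores),
        "highest": max(scores),
        "range": max(scores) - min(scores),
        "mean": mean(scores),
        "median": median(scores),
    }


# Hypothetical mobile scores from five separate runs, not real measurements.
mobile_scores = [52, 61, 47, 58, 66]
summary = summarize_runs(mobile_scores)
print(summary)
```

If the range across runs is as wide as the gap between a "needs improvement" and a "good" verdict, then the verdict from any one run should be taken with a pinch of salt.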

The number seems to be in contrast to what Google Search Console reports - all my pages report Good page performance - but the Field Data text report seems to agree. On a side note, out of all the indexed pages, only two seem to have mobile usability issues, and in both cases, these seem to be false positives, as the live test reports all green (plus they actually render correctly on the phone). For instance, one of the only two pages flagged here, the Windows 10 exploit guide:

Speaking of friendly, pale gray font on gray background isn't really friendly. Far from it.

Conclusion

And there you get it, my findings. Numbers that don't really say much on their own - without context, you don't really know what gives. Numbers that differ significantly between desktop and mobile, and numbers that differ from one sample to another. Numbers that don't agree with a different set of tools that offers similar functionality. Numbers that seem to be influenced primarily by third-party scripts, most of them created by Google. Some data that also includes false positives.

This is a short, simple test - and it shows a lot of variation and ambiguity on how performance slash speed is interpreted. Again, we know nothing of the content behind numbers, nothing of the actual quality. A page could have a perfect score, but then most of the modern Internet is a cesspit. That is something that needs addressing first. But hey, I'm just a lowly lowly cook.

I'm not sure anyone cares about what I wrote here - 'tis one man's opinion. But I believe that adding speed to the formula will only make things worse. Things are already bad with people chasing the mythical "SEO" unicorn. Speed will only lead to even more diluted "fast" content, even more fluff without substance designed to please the machine. Hint: I've never EVER tried any "SEO" recommendation, and the popularity of my site has grown, shrunk, grown again (with zero intervention on my end), shrunk and grown to the beat of financial considerations that are completely out of my control. Instead, I focus on quality and fun. But I also know I'm a dying breed of old school techies, and the future belongs to touch-happy idiots. Which is fine, because it's their future. I shall salute them from a decadent retirement on a nice sunny island somewhere, enjoying the dividend from companies that make such ample profits from the low-IQ crowds.

Cheers.