Updated: March 17, 2017

Wait. Before you say clickbait, let's focus on the definition of cancer. It is a group of diseases involving abnormal cell growth with the potential to invade or spread to other parts of the body. Now, let's replace diseases and cell growth with words like software and industry.

In the past 15 years or so, ever since the first software bubble burst and developers realized they needed a new way of making easy money, there's been an alarming and unchecked trend in the growth of software languages and development disciplines, all designed to support and sustain themselves. A living organism with unprecedented spread. Cancer.

How this article was born

While testing Fedora 25, I had my first taste of Wayland, and it was when I visited the official page and read the manifesto that it dawned on me. Here we had a new framework, created to make it easier for those developing it to develop it. What. Previously, a very similar platter of emotions erupted in my being while fiddling with systemd and trying to solve problems that were created by, guess, systemd, a complicated pile of code with no purpose other than to be a complicated pile of code that no one can debug, but it is more enjoyable for those who like writing Python to write Python or some similar nonsense.

Firefox, the same thing. We have a browser that is doing everything to make itself into a different browser. And now, it is changing its very fiber, the extensions mechanism, so that we get a new framework of extensions that is completely incompatible with the old one, plus it's going to alienate all the existing users. Oh yes, users. Supposedly the target audience who should benefit from all these software games. No, not anymore.

Windows 10, Linux Mint 18.1 Serena, more triggers for my battered, weary soul. New stuff that is coming out to replace old stuff, except it's crippled, it takes a bunch of years to reach the level of maturity of the old software, and then it's axed again, a new crippled version is released, and the cycle begins again. The list is endless.

Somewhere in the past 15 years, it all went wrong.

Why this is horrible

The purpose of technology, software included, is to make lives easier, more productive, safer, more enjoyable. A means to an end, not the end. Anything we use is there to help us satisfy primal needs.

For instance, a browser is a portal unto knowledge and entertainment. This piece of software is designed to get us to webpages quickly and efficiently, display them correctly, and also prevent unwarranted installation of new software on our systems. That's all. Nothing more. But no. If you look across the board, this is not what you get. Sure, there's a strong commercial element, of course, but most of it stems from the fact that browsers require strong development and would-be new features that no one truly wants.

This is a classic case of an organism that needs to feed so it can get better so it can feed some more. But if you think carefully, browsers do not need half the features they have, and they have been added and developed only because people who write software want to make sure they have job security and extra control.

Wayland, same story. And the argument for its existence is meaningless. On the server side, 99% of the time you don't need the graphical environment at all, so this has nothing to do with the vast industry out there. In the home setup, the old framework was doing well, and from the user perspective, things were just fine. Normal people don't know or care what powers their box. And that's a sign of an excellent product. It's transparent.

Now though, we have Wayland - and Mir - and for years, we will be in a state of beta limbo until developers finish playing, if ever, after which they will find another excuse to create another framework and start again, treating the entire world as one big sandbox for their own amusement and profit. Or maybe just amusement. After all, when writing code is your passion, your job and your goal, you don't ever really finish, do you.

The same can be said of the init systems in Linux, like systemd. In a nutshell, the system should start quickly and get into a working session. We had this in 2010 or so, with boot times down to a mere 10 seconds using init. No flaws, no bugs. Even in the commercial sphere, working with init, I do not recall any major problems.

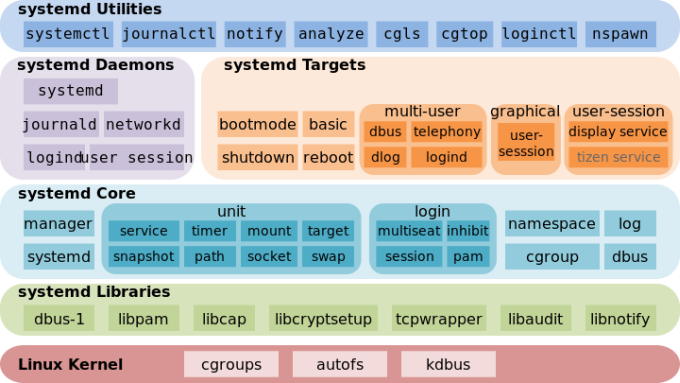

Then, suddenly, we have this new binary diarrhea with a hundred million modules, and for the past five years, this unstable, half-baked, undebuggable nonsense has been the backbone of most Linux distros. The invasive and pervasive nature of the systemd framework has also affected the stability of the user space, the very thing it should never have touched, and pretty much all problems with the quality of the Linux desktop nicely coincide with the introduction of systemd. The development continues, of course, and for no better reason than trying to reach the level of stability, maturity and functionality that we had half a decade ago. Someone landed themselves a lot of monthly pay checks by writing complex code to solve a problem that did not exist.

More components than the kernel itself, and then some.

And this trend is prevalent across the industry. All the new software frameworks are horribly complex. All the orchestration mechanisms are a garbage fest of functions and buzzwords, without any focus on the end mission. You start with something like containers, but they are so complex, they need a governor. So you end up with an abstraction mechanism, but the network layer sucks, or it is immature. So someone else develops a software-based virtual network framework. Then, three more companies create their own orchestration tools, and there's too much fragmentation. This calls for a new framework that will manage all these others. All because the initial implementation is lousy. This is a classic case of parasitic behavior. In fact, modern software development can be summed up thusly:

Let's solve a problem that does not exist.

Buzzwords & Bullshit

Like Sense & Sensibility, only better. Of course, this whole dot-com - or should I say dot-io - software mania is so profitable that everyone jumped on the bandwagon, it's a bullet train now traveling at 1,450 km/h, and everyone is clinging dearly and fiercely, lest their profits perish. This has also led to a tide of morons flocking to the software world, and now they have special words and phrases to impress other morons into buying into this digital religion.

Agile

The most special word used by morons. Rather than releasing software once a year or so, companies now have a rapid release cycle, and they supposedly adhere to this agile model, to distinguish themselves from the would-be legacy dinosaurs. This leads to a quick increment in version numbers and much reduced quality.

But that's the thing - having lots of versions creates an impression of ACTIVITY, and this is exactly like the Ministry for Administrative Affairs in Yes, Minister, Season 2, Episode 1, The Compassionate Society, a hospital with no patients but tons of administrative staff. Time index 17 minutes 54 seconds. Pure gold.

Of course, the agile model does not really work for 90% of companies, because they think it's about speed. They forget the fine details like assigning their best people to work on these products, the need for self-discipline, a high level of autonomy, and of course, being able to create software products with reasonable milestones. But as long as they are agile, they are untouchable. Because they are agile. Agile, you hear!

DevOps

Someone thought it would be good to rein in the code monkeys and stop them from making the operations unbearable. Basically, version control and configuration management, with system administrators in charge of the servers. Except they spend all of their time writing code and debugging it rather than administering servers. A beautiful paradox.

CD/CI

A synonym for Agile + DevOps. If you hear someone say this, ask them if they use TDD and then tell them you prefer OpenTTD and laugh (or unplug one of their seven monitors).

JSON

Glorified CSV files because someone did not like XML.

API

The favorite buzzword used by management to describe something when they have no idea what it does or how it works. But it is supposed to mean - you are an idiot, you cannot code, here, use this line here, and when it runs, it will retrieve something from a server somewhere. In other words, quite often, HTTP requests encapsulated in bullshit.

Orchestration

Someone wrote shitty code, so someone wrote more code to manage shitty code in a less shitty way. An excellent way of making huge money. The most profitable way of being perceived as savvy, especially in the cloud space.

Internet of Things (IoT)

This is the most genius invention EVAR. Basically, you embed a tiny Web server into everything (most likely Linux), and then you use "API" to connect to it, so you can control things digitally. The wisdom of this concept is that it will feed the entire post-2000 bubble for another 20-30 years at least, so we need not face another revolution just yet. The IoT reality will create jobs for roughly a hundred million developers in the next few decades and bring in shitloads of cash to those who do it right.

Software developers must not touch product

There's your root of the problem: people with absolutely zero social and marketing skills telling the world what the end product should be like. When you let software developers define the final state, your world looks like one big debugger session. This is so stupid and pointless that watching reality TV feels like inventing calculus.

This is why you have a hundred pointless programming languages out there, which serve no higher purpose than to make whoever is writing a piece of software somewhere feel more comfortable in their chair. The user is a nuisance, and usage patterns are invented to justify software decisions.

A great example is the tabs-on-top shit. This goes against basic human thinking, reading logic, and of course, UI hierarchy, but when you get Star Wars fans dictating how things should look, this is what you end up with.

If anything, software products - good software products - should be designed by people who have no formal computer science education, perhaps even no tech skills. You want them to focus on human needs and how people interact with things. Not through the eyes of a cook, who sees buttons and fields and forms as ingredients to their next pay check, but through the eyes of a human who seeks to solve a problem and satisfy a primal need.

Instead, more and more companies are losing control of their products by letting their developers go public. The reason is, Google and Microsoft and Facebook were created by nerds, so everyone thinks that if they put someone high on the spectrum in front of a camera, or perhaps let them design software, they will end up being this multi-billion-dollar conglomerate. Except that's not how it works.

Essentially, people who write code are just glorified digital welders. They need to connect bits and pieces of text written in an arcane way so that they do something useful and noble. When you let software methodology become your modus operandi, bad things happen. Let's go back to Firefox, and then we shall talk some more about Linux, both of which are dear to me.

Firefox is a browser. A portal of information and entertainment. Sometime in 2011, Mozilla decided to change Firefox to be more like Chrome. Guess what? In 2017, Firefox has about 50% less market share than it did in 2011. Because why go for a clone when you can have the original?

Furthermore, in these six years, Mozilla introduced software-driven changes, like tabs-on-top crap (each tab is a separate process supposedly, who gives a shit), rapid release cycles, several pointless features that serve no human need, and now they are also going to change the extensions mechanism for a new one, again because the OLD ONE IS TOO COMPLEX AND DIFFICULT TO CODE OR SOMETHING.

Image courtesy, memegenerator.net, DreamWorks SKG.

Rather than forcing their special snowflakes to work hard and create a SEAMLESS product, they will do exactly the opposite. They will destroy the old framework, put in place a new and undefined one, 99% of all extensions will die, and even more people will abandon the browser, because add-ons are the chief reason why people stick with Firefox. This is so obvious, but not when you pleasure yourself to GOLANG.

What should have been done - whip the developers into submission, force them to create a backward-compatible framework that supports everything, and make backend changes that do not affect the user in any way. That's how product-driven development is done. Instead, you have developers talking about WebExtensions - notice the stupid naming convention - and API and hashtag, and we have a new lowercase logo, we're cool and modern. Did you read about the new Mozilla logo?

Look at the reasons for the change - a nod to URL language. What? If I had asked my granddad about URL language, he would have given me a brick and sent me to play in the minefield, but not before hitting me on the head with it for talking nonsense.

Back to WebExtensions, you have developers talking crap on how this will be done. No. I don't want to read technobabble. I don't care. What I do care about is that when I launch my browser, my PRIMAL NEEDS are satisfied. If a code change disrupts my usage model, it's shitty code. I don't care if someone needs to spend 100 years in a basement fixing this, it's not my problem. P.S. If you're wondering, what will happen is, Firefox will lose even more market share, because once extensions stop working, there will be even less incentive for loyal users to stay with a browser that is trying to ape Chrome.

Linux. Ah well. What is there to say about Linux. Everything. Same functionality, 10 different programs. 10 different forks and spoons, created so someone can write code when they feel like it. New audio and video frameworks that are bloated and complex and self-serving. More bugs, more crashes, reduced performance, reduced battery life. That's the end result of software-driven development. Because for developers, this is a lovely and colorful playground. This is what they breathe and live. For end users, it's a nightmare designed by amateurs.

PulseAudio? Is my sound any better? No. Shit. Wayland? Are my graphics any better? No. Shit. Systemd? Is my system more stable and/or does it boot faster? No. Shit. And the list goes on and on and on and on.

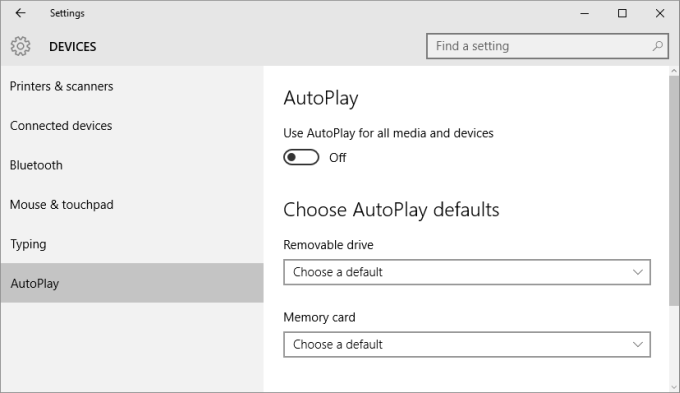

Windows 10. Half-baked releases. I didn't pay money for half-baked crap you will complete in 2 years. I don't need a system settings menu that will be equivalent to the Control Panel in 6 months when I had the perfectly sane and functional Control Panel in Windows 7/8 years ago.

Luckily, it's so easy to placate cretins - just give them shiny things that make it easier to watch videos, both the SFW and NSFW flavors, and swipable screens full of big icons the likes of which they use in the film Idiocracy. That's why this software-driven nonsense can survive.

Basic needs

In the end, we need to go to basic needs. And then, realize how everything is very simple. Why did smartphones succeed? Because they allowed a common person to do the same things they did on the PC cheaper and faster. Not high-end stuff that we geeks love, but the ordinary mail/pr0n/music activities of the average user. Why did tablets not succeed? Because they tried to fulfill the roles of a laptop and smartphone at the same time and never quite nailed it.

For that matter, netbooks fall into the same category, as I've already explained in my article on the future of computing, where I also mentioned high-end PCs going strong. Which they are, once again, because people need power. In that same article, I also talked about Firefox not dying or declining or whatever - this was just before they completely changed their focus and approach and ruined it. Basic needs.

And when you look at the world of technology through these lenses, you realize that software is irrelevant. The Linux desktop is meaningless but Android is the king of mobile. Microsoft rules the desktop and yet it struggles on the phone. So it's not software. It's the usage model. It's what sort of basic needs they can satisfy.

So if you ever wonder why software developers should not be allowed to do anything more than just write code, look at successful products and try to figure out what the common denominator is in terms of underlying code. There isn't any. Software is entirely replaceable, and must be completely transparent.

That's your one measure of success for good code. Take any product, say a Linux desktop. Then take one from a year ago. Can you still do the same things and workloads, or do you need to ask yourselves things like which distro, version, kernel, environment, library, or such? If you have to ask, the code cannot be decoupled from the usage model, and you know it's pointless and it needs to be deleted. This is true for pretty much any major product and framework that's come out in Linux in the past five years.

It's not about the age of the software and how suitable it is to the modern era, either. Most of the critical stuff runs on operating systems designed decades ago and is still being written in programming languages from the 70s. It's just the whole IoT nonsense where all the fuss is. The useless stuff really.

It's not about making it easier for developers - because you may naively think they will then have more time to fix user problems. No. Because if their code is problematic, they can just not do it in the first place and save all the time there is. Once they 'nail' it with one half-polished product, they will get bored and start working on another beta project. A living organism needs to sustain itself and grow, remember? And when the growth is uncontrolled, well.

Conclusion

Kids fresh out of university - or college - may be impressed by this brave new world of chaos and programming languages, but at the end of the day, it's about the user. If you go to a carpenter and ask for a chair, they should build it for you. The analogy for software development is, they will convince you that a bed is better than a chair, and that it is easier for the code people to build and support a bed. That does not fly.

Every day, I spend hours sitting in front of a computer, doing all sorts of things. A not insignificant portion of my precious time is spent fretting, wondering when my browser, office suite, desktop environment, or even the operating system itself will suddenly stop working, because some software developer decided they are bored. I don't know if people in the olden days felt this way with their trains, radios, coaches, or whatever, but I doubt it. This Year 2000 kindergarten bullshit needs to stop. Once software development fades into the quiet background it deserves, and companies re-focus on the user, we will see an upsurge in creativity, quality, and fun.

When I go to a restaurant, I don't want to know what the staff is doing with my food. I'm paying for the service and ignorance. And I expect the same from software. I'm paying, I'm the boss. It's time the software industry started serving its boss, the user.

Cheers.