Updated: September 22, 2017

Recently, I've come across a bunch of references, mentions and rumors that several companies, names like Mozilla, Google, and maybe some others, are interested in introducing new, novel mechanisms to combat the phenomenon of misinformation and fake news. Then, I read some more on the Vivaldi browser and what their CEO had to say about Google, and I decided to write an article on modern censorship and how it comes to bear in the digital age dominated by a number of data companies.

Let's begin with a simple statement - mine. Censorship is horrible. It's a short and slippery slope from benevolent intentions to fascism. People do not need to be sheltered from information. If they lack the ability to exercise judgment, then they should also not be entrusted with things like cars, guns or children. Morality and law are two completely separate things. Finally, the big question, who gets to be in charge and decides what is good and what is wrong? Onwards.

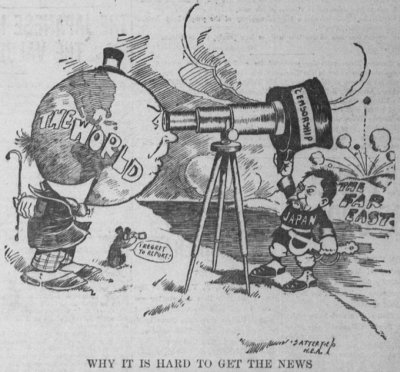

Oh, ze irony circa 1904.

News

Technically, news is any piece of information you didn't know before someone told you. In the old world, this was the domain of large broadcast companies and newspapers, but the Internet, and more recently, social media, have shattered these boundaries, making everyone an almost equal voice in the vomit drum of noise and stupidity.

Technically, a vote-capable adult should be able to exercise some capacity for reason and critical thinking. If someone provides you with a would-be fact, you should check that it is correct. For instance, compare political news about a specific topic by reading American, French and Russian newspapers, to get a more balanced view of what's happening. Alas, too many people can barely read, let alone act outside their narrow, narrow-minded sphere of ignorance.

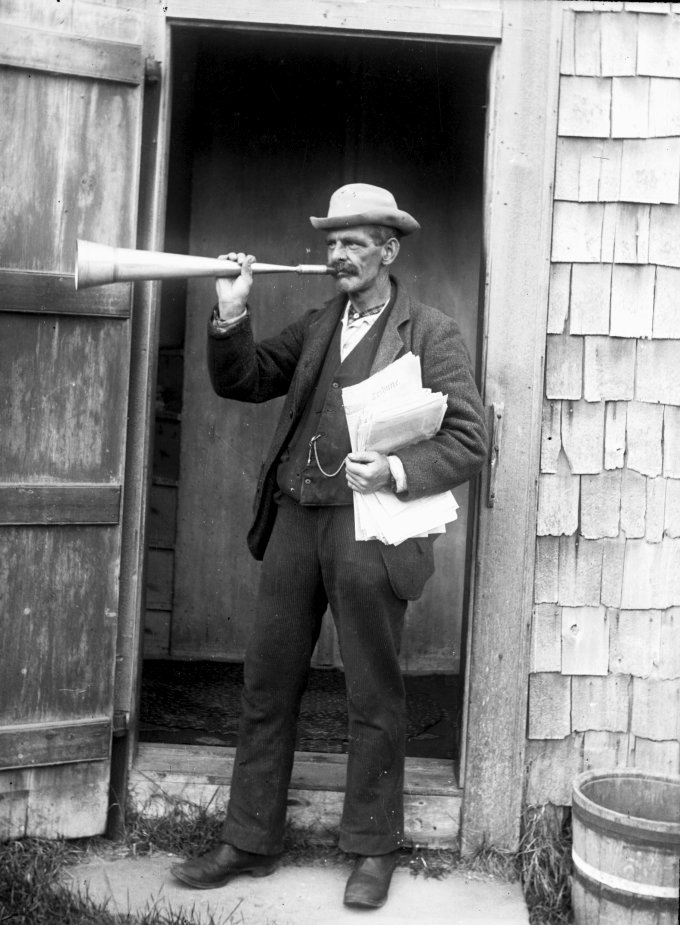

Note: Image taken from Flickr, no known licensing restrictions.

And so we get to the possibility that people may be exposed to things that may not actually be correct in some context. To make it spicier, data companies seem to have decided they should dabble in being the arbiters of truth and morality as a replacement for the aforementioned critical thinking and reason that seem to be lacking in about 99% of the population. Welcome to the future where the CORPORATION does all your thinking for you.

Fake news

This is a rather interesting and hot one. A lot of energy seems to be focused on initiatives designed to stop, curb or eliminate fake news. While the mission may be noble - arguably, to what end - the very definition of the problem is problematic. To begin with, what classifies as fake news?

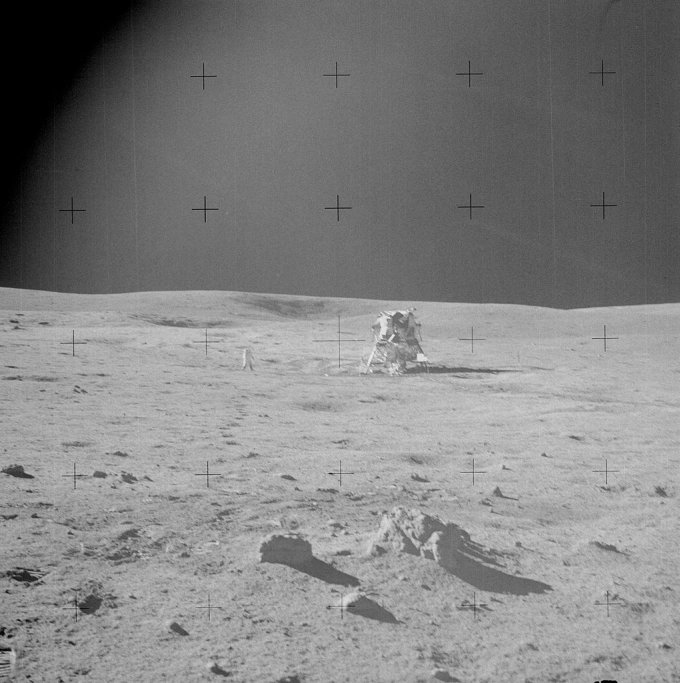

Let's say someone writes: "aliens landed on the moon" as an article. Factually, this is not correct (almost), so it may be considered fake. But then, what if the author actually intended to deliver a tongue-in-cheek, satirical or allegoric message, which requires the very premise of falsehood to be effective? In that case, while technically fake, it can still be morally correct in the context of the message. And since even humans often struggle with different facets of humor, be they intellectual, cultural or linguistic, the AI stands no chance. Turing FTW! Yet, we face a choice.

If you introduce truth filters, you will deny smart people so much LULZ debating moon landings and flat earth online.

Which brings us to opinion versus fact. Let's say someone opines that a product X is shit. Does this warrant any sort of attention from the "truth" algorithms? Factually, no, the product is not made of feces (maybe). But it could be a very valid opinion. And then, it becomes complicated, because opinions cannot really be quantified or scaled. They are inherently personal and therefore always valid. So we steer from information into human dynamics.

Should the word shit be considered as a valid qualifier? It might be rude, but it might also be the best choice to describe the particular emotion or impression. Should the opinions of certain people be held in higher regard than others? And on what subjects really?

Now it becomes ever more complicated, because the Internet is already watered down to a low common denominator of political correctness, and few people dare express an opinion. You can see this in product reviews around the Web. Mellow nonsense that does not really tell you anything. Lawyer-like language that techies try to use and fail. People are afraid to take a stance, because they fear being judged. On its own, that's a problem, but it also indicates a wider issue with how information is handled today.

Information flattening & popularity

Another problem we have is that everyone has more or less equal access to technology. Which means everyone can more or less express themselves out there. You can come across any blog, feed, video, or whatever, and the person starring in them may be telling you how and why something should be done, without having any credentials whatsoever.

Fitness blogging to name one; everyone and their mother will tell you what to eat and how to do something. But then, they have zero medical or sport education, and yet, their voice is equal to any other, as far as the Internet is concerned. True, you should not seek advice on social media about your medical problems and such - we go back to the initial claim and statement - but the fact it can be done with the same amount of technical complexity (or even less) as accessing a reputable medical journal means that almost any piece of information has equal weight. This is a frightening realization.

Popularity also becomes a problem. Something can be widely popular, but that does not make it true or useful. Again, we have fact vs. opinion, but if someone says something, three hundred or three million followers do not necessarily indicate anything about the quality and veracity of the provided information. In fact, there is neither correlation nor causation between the strength of information, i.e. popularity, and its factual validity.

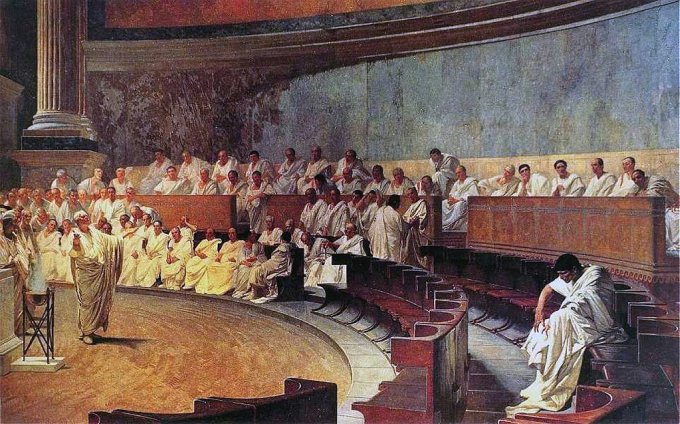

Cicero, on the left, has many online friends. Catiline, on the right, does not. Guess who won the argument?

And yet, unfortunately, the majority of people are barely able to count to 10 without getting confused, so for them, glamor, flash, bling, and sheer mass will be more than enough to confirm bias and belief, and so, you end up with he/she who shouteth the loudest winneth. The data is already skewed, and we've only just begun.

Money

This issue is exacerbated by media giants and Internet information giants - the word search is not sufficient to describe the phenomenon - as they directly profit from how the information is used, and how the space of that information use is grown. It is in the best interest of data companies to increase data use and dependency, and that means channeling people toward highest revenue streams.

This means that it's no longer a matter of fact, or even opinion - it's a financial consideration. For instance, Web page ranking and popularity. Most of the so-called SEO bullshit has NOTHING to do with content. It's all about making content appealing so that you can grow traffic, regardless of what kind of traffic you peddle. The fact you might be selling horse manure by the verbal pound makes no difference.

Page loading speed. This is another example where moronity and money take precedence over data integrity. If a web page contains more than one paragraph of text, assuming that people actually intend to read it, the rest of the page will have loaded in the background long before you've finished reading the paragraph.

It's not all about swiping left or right. You can actually READ.

Note: Image taken from Wikimedia, licensed under CC BY-SA 3.0.

And yet, companies penalize - and favor - websites based on how quickly they load information. This has nothing to do with the data itself. It's all about infrastructure. It might make some sense if we're talking some interactive bullshit full of images to make morons happy, but with actual data that needs LANGUAGE to process? Nonsense.

So we're in a situation where you have data giants controlling the data - and shaping it the way they see fit. Ordinary people are not privy to the monetary, altruistic or other considerations there might be here, they only see the end product. This means that the landscape of information that people consume is directly impacted by algorithms designed to make data companies bigger, stronger and more profitable. Data manipulation is already happening on a mass scale. Adding a few more qualifiers will not make much difference, except that it's visible now, and it carries a moral note.

Internet morality police for morons

So what we end up with is a theocratic model - a Web 2.0 religion but a religion nonetheless - where companies dictate what idiots should see when they browse about. It's not just the matter of how accessible the information is, it's also about deciding whether the information is good or bad for the masses. That's pure propaganda. Besides, what credentials do Internet nerd companies have to peddle their morality model onto the wider world population? Apart from the obvious fact that whatever the data giants do will be very US-centric and thus irrelevant to some 80% of the globe, I don't need anyone imposing their moral compass and beliefs on how I should access and use data.

If anyone should do this - it's the government of whichever country the data currently resides in, as the government has a direct responsibility for the citizens and their welfare. Again, we can talk about the concepts like nanny state, police state and whatnot, but you still have people getting services from the government, you have people paying taxes, and you actually have people with credentials and direct responsibility in between.

The last I checked, there isn't a counter called "Internet memes" in my local municipality offices. There's one that says "Electricity, so that idiots can post nonsense online and protest about how their lives are difficult in the West dot com."

Moreover, this model removes direct responsibility from people. If someone else filters information for you, then you don't feel like you need to do it yourself, right? Plus the model is horrible. And useless. We already have so-called "naughty" filters to protect "children" - it's always the "think of the children" model to tickle the guilt glands among the billions of clueless cretins - but never mind the fact you can see ten executions of people or brutal murders on YouTube, LiveLeak or 9gag at any given moment. In the worst case, you need to "sign in" to see the brutality so that companies can profile you and then send you advertisements and recommended videos featuring more of the same horrible nonsense. Woe the random boob, you want the violent stuff!

If companies want to "protect" people, then they should also be criminally accountable for when they fail to do so, the same way governments are. You want to filter out crap? Good. But then you will also be held accountable when someone sees something they shouldn't or don't want, and if someone downloads a movie because there was a button, then whoever put the button should be asked questions. Or fined. Or jailed.

But it can't work both ways. Either you give people EVERYTHING, and then they are in charge, or you control what they get, but then you are in charge. Zero accountability model does not work. Except as some sort of stupid morality banner under the guise of political correctness and similar dross.

We come back to the beginning

Too often, basic needs are flaunted as reasons for exercising more control over people. Elected governments in democratic societies have the right to do that. You may not like it, but that's part of the cycle. But when PRIVATE companies start playing this game, it becomes dangerous. They have no regulatory role in people's lives, no obligations, no responsibilities, no liability. No one chose Mozilla or Google or Facebook or Baidu or any other company to be their moral compass of the day. Hi-tech companies can play their kindergarten values in their cubicles, but not out there in the real world.

It is not Mozilla's - or anyone's - duty or responsibility to decide what people should see and how they may be affected. If it's allowed BY LAW, then the Prude Brigades have no business there. Being stupid, rude, offensive, or a troll is not a criminal offense in most civilized countries, and therefore, people are legally allowed to express themselves as they see fit. If someone does not like that, it's their problem. If someone does not have the ability to analyze, judge or criticize data, again it's their problem. Should we also issue fines to people who use sarcasm or cynicism or irony in day-to-day conversations because this could make morons uncomfortable?

The real danger in this model is - if this "voluntary" censorship sticks, then the next step will be profit and then "benevolent" manipulation, as supposedly negative data streams that cause people unnecessary "mental burden" are removed. You end up with brainwashing.

The idea that information might not be trustworthy is THE GOLDEN TENET of intelligence and human curiosity. You CHALLENGE ideas, you doubt what you're told, you never take what you're told for granted. You compare and correlate. You test and evaluate. It's the only way to learn and grow.

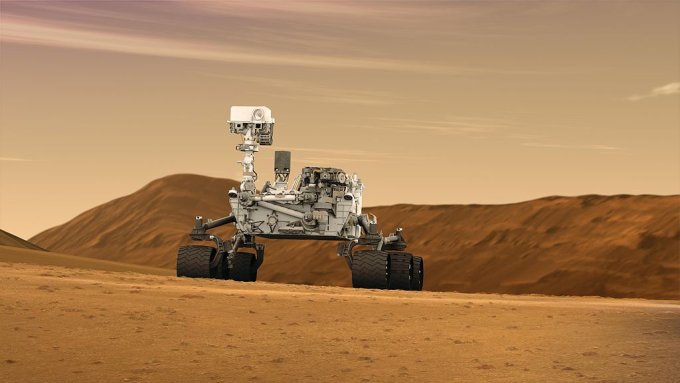

Mars Rover didn't get to where it is because people listened to Charles Duell - in fact, they did not, because he never said what people think he said. See? You double-check stuff. Amazing, right.

You do not seek validation in the masses, you do not seek comfort in sheep mentality, and you do not need to live in some bubble of isolation. This is the worst kind of stupidity that can be. Complacence bred from pure and simple ignorance.

Lastly, this is going to be a colossal failure. Because it will be a small-minded project designed to accommodate the Random College Student Exhibit A living in the San Francisco area and it will remain that, with algorithms that fire off blanks. It will fail to take into account the fact that most people worldwide are bilingual, and that they don't speak English as their first language. That the way people think and express themselves is not the way 400-odd million native English speakers do. That people hold different cultural values. That they see things in a completely different way.

A good example that comes to mind is the whole Baby Boomer, Generation X, Millennials concept. It's ignorant by design. It's cliche. It's grossly inaccurate. It tries to put people in these cookie-cutter marketing-sloganeering boxes. It does not apply to the Russians or the Chinese or the Indians, because they did not have Baby Boomers. It does not apply to the Slovenians or the Turks, because they don't give a shit about being politically correct or hiding behind passive-aggressive racism. It does not apply in Israel, because everyone is a cynic there. And much the same way, keeping people's mental health all cushy is not relevant in Bolivia, because people there have more important things to worry about than whether some random idiot will be offended by a piece of mindless trolling online.

Conclusion

Truth initiatives are a horrible idea, because they imply there is just one truth and that it should somehow be accepted blindly. What this is going to do is make smart people even more suspicious of the nonsense the media tosses at them, and the stupid people even stupider. Maybe that's the goal?

I do not need pseudo-liberals to be my tranquility police. I do not subscribe to arbitrary values of goodness, because such a thing does not exist. The walled-garden mentality is a horrible thing, and it's been done before, throughout history, in countries, societies and regimes that do not resonate well with the so-called democratic process.

The greatest human trait is curiosity. The need to learn and challenge conventions. We got where we are by fighting the established truths, by coping with the unknown and uncertain, by not accepting reality at face value. This is just the digital version of going back to the Middle Ages and following the party dogma or some similar nonsense. One truth to bring them all and in the stupidity bind them. In the land of idiots where the data lies.

Cheers.