Updated: November 9, 2011

Welcome to the third installment in the Linux cool hacks series. Like the previous two, this article is all about cool things you can do with your Linux that are not well known and yet rather useful. When I say cool, I mean the kind of people who laugh hard at XKCD's sudo make me a sandwich rather than someone wearing Zara flipflops, although those are not mutually exclusive.

Anyhow, we've had some 17 tips so far. Let's try a few more. I will demonstrate using Ubuntu, openSUSE and CentOS, to show you that the choice of the system does not really make much difference. So please join me. Tomorrow, after having read and practiced these tricks, you will be able to impress your significant others and colleagues and there ought to be much rejoicing.

1. Show (kernel) functions in ps output

This is an interesting need. Say you have a program that is misbehaving. You do not want or cannot attach the debugger to it, as you fear you may disrupt some delicate time-race condition or possibly even crash the application. Or it may be stuck in a non-debuggable state. Or it may not have symbols or deny ptrace hooks or who knows what else. All in all, lots of geek lingo, the bottom line is, you just want to know at what stage the execution of the software is stuck, in the quickest, least intrusive way possible. ps will do.

This one specific example is even written in the man page:

ps -eo pid,tid,class,rtprio,ni,pri,psr,pcpu,stat,wchan:14,comm

And you will be able to see, in the WCHAN column, the kernel function in which your process is currently sleeping. Most of the time, this will be completely meaningless, but if you have an inkling of how your process ought to behave, or if you are a developer, this could be useful information.
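To watch a single process rather than the whole table, a minimal sketch along these lines might help. It assumes a procps-style ps, and the sleep command is only a stand-in for your misbehaving program. The function name shown under WCHAN depends on your kernel, and some systems show just a dash.

```shell
# Start a stand-in process that will certainly be sleeping in the kernel.
sleep 30 &
pid=$!

# Give it a moment to enter the sleep, then show only that process.
sleep 1
wchan_report=$(ps -o pid,stat,wchan:20,comm -p "$pid")
echo "$wchan_report"

# Clean up the stand-in process.
kill "$pid"
```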

2. Nohup

Nohup is a special Linux command that lets you detach processes from their shell by making them immune to the hangup signal (SIGHUP), allowing them to run in what you might want to refer to as the background service mode. Indeed, if you take a look at the process table (ps), you will see a lot of processes that were spawned by the system and run without a tty.

When you start a program from the command line, it will live within the shell of your terminal window, even if you background it with &. When you kill the shell, all of its child processes will die too. In a few select cases, we want to avoid this, so we need a mechanism that will detach processes from their shell. A simple method is to create a startup script and add it to /etc/init.d, but this should really be reserved for services.

So nohup will daemonize our processes - make them daemons. Sounds scary, but it's just geek lingo designed to impress girls. Anyhow, nohup is invoked against the desired binary or script. You need a full path if the binary or script is not in the PATH. You must also background nohup itself, so that it is detached from the shell.

nohup <command> &

Nohup will redirect the output to nohup.out in the current directory. You should also make sure to use the proper redirection for the standard input, output and error to avoid hangs.

Here's an example. Notice that script.sh runs without a terminal, as denoted by ? in the sixth column. For instance, the grep command runs on the virtual terminal pts/3. Moreover, script.sh is parented by init (PID = 1). And you can also see the nohup output, which is just a silly echo in this example.
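The redirection advice can be sketched roughly as follows. The log file name and the tiny inline job are made up for the illustration, and the wait at the end is there only so the demo can print the log right away. A real detached job would not be waited on.

```shell
# Hypothetical log location; pick whatever suits your setup.
logfile="${TMPDIR:-/tmp}/nohup-demo.log"

# Redirect stdin, stdout and stderr explicitly so nothing is tied to the terminal.
nohup sh -c 'echo started; sleep 1; echo finished' > "$logfile" 2>&1 < /dev/null &
job_pid=$!
echo "Detached job PID: $job_pid"

# Demo only: wait for the job so the log can be shown immediately.
wait "$job_pid"
cat "$logfile"
```

With explicit redirection, nothing is written to nohup.out, since nohup only falls back to that file when the output is a terminal.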

3. Fallocate

Fallocate sounds like a meme, but it is a very neat command that can save you a lot of time. To prove that, let me ask you a question first. What do you do if you need to create a very large file, which cannot be sparse? You use dd and source the bit stream from /dev/zero, but this takes a long time. It's normally limited by the device speed, which is about 80MB/s for most disks. So if you need to create an 80GB file, you will need some twenty minutes to do that, in the best case. With USB connections and slower disks, this can grow to 40 minutes or longer. fallocate solves the problem by preallocating blocks instantly.

This is a relatively new command and system call in the Linux kernel, available since revision 2.6.23. All right, let us demonstrate.

First, we create a 10MB file. Nothing special. But then, to show you how powerful this command really is, we will compare with dd. While files this small could easily be written to disk cache, masking the true speed, the demonstration is powerful enough without having to use large files.

fallocate -l 10m 10mbfile

Now, the comparison. Notice the actual time differences between fallocate and dd. Even for such a tiny file, the difference is huge. fallocate is some 70 times faster in terms of system time, even though the entire operation took a fraction of a second.
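If you want to repeat the comparison yourself, here is one possible sketch. It measures wall-clock time with GNU date rather than system time, so the numbers will not match the 70x figure exactly, and the file names are arbitrary. It also assumes the filesystem under your temporary directory supports fallocate.

```shell
# Work in a scratch directory so nothing important is touched.
workdir=$(mktemp -d)
cd "$workdir"

# Preallocate 10 MiB; the blocks are reserved without writing any data.
start=$(date +%s%N)
fallocate -l 10M prealloc.img
end=$(date +%s%N)
echo "fallocate: $(( (end - start) / 1000 )) microseconds"

# Write 10 MiB of zeros the traditional way.
start=$(date +%s%N)
dd if=/dev/zero of=zeroed.img bs=1M count=10 2>/dev/null
end=$(date +%s%N)
echo "dd:        $(( (end - start) / 1000 )) microseconds"

# The first column shows allocated blocks, next to the file size.
ls -ls prealloc.img zeroed.img
```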

Now, fallocate will remain as fast, without any regard to file size, while dd times will increase. When you have to create files that are several GB in size or much larger, you will appreciate this capability. For example, you may need to create swap files in this manner and preallocate them to partitions during the installation setup. You might not be able to wait long minutes or possibly hours for this operation to complete. Fallocate resolves the problem.

4. Debug filesystems (debugfs)

Debugfs is an interactive tool for managing EXT filesystems. Invoked from the command line, it allows you to change the mode, block size, write to the superblock, force the filesystem to execute specific commands, and more. Naturally, this kind of work means you know what you're doing and you're well aware of the potential hazards of data corruption when working against devices and their filesystems in a sort of live operation mode.

debugfs is invoked against the desired target device. By default, it will open the filesystem in read-only mode, as a precaution. This is quite useful for trying to salvage data from corrupted filesystems. Other commands that come to mind when trying to work with filesystems include tune2fs and resize2fs.
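A safe way to get a feel for debugfs is to point it at a throwaway filesystem image instead of a real device. The sketch below assumes e2fsprogs is installed; the image file is a stand-in created just for the demo. The -R option runs a single request instead of dropping you into the interactive prompt.

```shell
# mke2fs and debugfs often live in /sbin, which may not be in a user's PATH.
PATH="$PATH:/sbin:/usr/sbin"

# Build a small ext2 filesystem inside a regular file (stand-in for a device).
img=$(mktemp)
dd if=/dev/zero of="$img" bs=1M count=8 2>/dev/null
mke2fs -q -F -t ext2 "$img"

# Read-only is the default mode; list the root directory and quit.
listing=$(debugfs -R "ls -l" "$img" 2>/dev/null)
echo "$listing"

# Remove the throwaway image.
rm -f "$img"
```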

5. Blacklisting drivers

The Linux kernel comes with a ton of drivers. Some are built directly into the kernel at compile time, which is done by specifying Y in the kernel configuration, and some are available as dynamically loadable modules, which is done by specifying M. The modules will later show under /lib/modules, in a directory matching your kernel.

Now, the kernel footprint could be big and contain too many drivers that you do not need or even contain conflicting drivers that interfere with your work. For instance, you might not want ipv6, which is something we tried in my Realtek network troubleshooting on Kubuntu Natty on my latest desktop, or perhaps you might not want the Nouveau graphics driver, as it conflicts with the Nvidia driver and prevents its installation, as we have seen in my CentOS Nvidia guide.

There are several ways you can disable drivers - by blacklisting them. Not a new thing, we've done the same back in 2006 with my Linux guide of highly useful configurations. You can make permanent changes by editing files on your system or pass parameters to the kernel commandline in the GRUB menu.

Using the CentOS example, you can disable the Nouveau driver by appending the following string to the kernel command line:

kernel /boot/vmlinuz <all kinds of options> rdblacklist=nouveau

Once your system boots and you are 100% confident the change works well, then you can make the change permanent, either by editing the GRUB menu or by adding the driver to the /etc/modprobe.d/blacklist or /etc/modprobe.d/blacklist.conf file, depending on your distribution.

echo "blacklist <driver name>" >> /etc/modprobe.d/blacklist

Please make sure you have backups before you permanently alter your system. Finally, some drivers will have writable parameters exposed under /proc and /sys, allowing you to echo new values on the fly and make changes as necessary. We will discuss that a while later.
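Before touching the real configuration, you can rehearse the change in a scratch directory. This sketch writes a blacklist entry in the form modprobe.d files expect (blacklist followed by the module name) and checks whether the module is loaded at the moment. The temporary directory is a stand-in for /etc/modprobe.d, and nouveau is just the example module from above.

```shell
module="nouveau"

# Stand-in for /etc/modprobe.d, so the sketch can run without root.
conf_dir=$(mktemp -d)
conf_file="$conf_dir/blacklist-$module.conf"

# modprobe.d entries use the form: blacklist <module name>
printf 'blacklist %s\n' "$module" > "$conf_file"
cat "$conf_file"

# Check whether the module is loaded right now.
if grep -q "^$module " /proc/modules 2>/dev/null; then
    echo "$module is currently loaded"
else
    echo "$module is not loaded"
fi
```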

6. Browsing the kernel stuff

This is a vague title, but what I'm referring to is the capability to quickly inspect kernel functions, check header files, determine whether your applications are trying to run code that belongs to the kernel or something else and so forth. To this end, there are many tools you can use. We'll examine a few.

First, you can go online to LXR - the Linux Cross Reference site, which indexes all source code in the kernel repositories. So if you're looking for some function, just input the name or part thereof into the search box and start reading.

Then, there's cscope, which we saw in the Kernel Crash Book. If you have kernel sources installed on your machine, you will be able to check what functions, text strings, symbols and definitions are declared in different source files. This is quite useful if you are trying to debug problems with your applications or perhaps even kernel crashes. To that end, you might also be interested in ctags.

7. Some extras

The tips listed below will probably not serve you that often, but it is good to know about them. Almost like hoarding water for the nuclear winter, so to speak, only more fun. Now, please note that you cannot follow the advice below at all!

It's a sort of a paradox, but unlike so many people out there, I will not give you blanket suggestions on how to utilize your machines, as every single use case is different. Saying that X will speed Y is utterly and morally wrong. One man's tweak blessing is another's curse. Do not even change configuration because someone somewhere said it ought to work, make your system work faster, be more responsive, etc. 99% of these wild and happy recommendations are valid for single home machines with no regard to reality, especially not businesses with heavily loaded production servers. Therefore, be aware of the possibilities, study them carefully and then apply your best formula.

/proc and /sys tunables

Explaining what /proc and /sys do is beyond the scope of this article by three whole quantum leaps. But they are very important pseudo-filesystems that let you tweak all kinds of things, on the fly, no reboot required.

In this section, I will try to elaborate on several useful features, like CPU affinity, memory tunables, scheduling, and a few other items that will normally earn you a good beating from your neighbors if you ever speak of them in public. Let's do it.

For example, if you have a multi-processor system that does very specific tasks, you might want to bypass the internal scheduling mechanisms and force your cores to process only certain workloads. Normally, this tradeoff has more problems than benefits, so please don't make any changes just for the sake of being cool.

To give you a practical example, you might want to assign interrupt handling for most heavily used network channels to CPU1, while allowing the rest of the tasks to work on CPU2. Indeed, if you have a box that has several network devices and churns data like mad, loading one specific processor might be a good idea in ensuring the quality of service for other tasks. Then again, you could ruin everything, so be careful.

To get this going, you need the processor bitmask, which you can derive from the number of available processors on your box, as well as the corresponding interrupt for the channel you wish to assign to a specific processor.

cat /proc/cpuinfo

cat /proc/interrupts

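And then, we do the magic - force IRQ 30 (Wireless, iwlagn) to the first processor. The value is a hexadecimal bitmask, so 1 selects the first CPU, 2 the second, 4 the third, and so on: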

echo 1 > /proc/irq/30/smp_affinity
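Working out the bitmask by hand gets tedious beyond a few cores, so here is a small helper sketch. The cpu_mask function is my own invention for this illustration, not a standard tool. CPUs are counted from zero, so the first processor is number 0.

```shell
# Print the hexadecimal smp_affinity mask that selects a single CPU.
# CPUs are counted from zero, so CPU 0 is the first processor.
cpu_mask() {
    printf '%x\n' $(( 1 << $1 ))
}

cpu_mask 0   # first processor
cpu_mask 1   # second processor
cpu_mask 3   # fourth processor

# Show the current mask for IRQ 30, if that interrupt exists on this box.
irq=30
if [ -r "/proc/irq/$irq/smp_affinity" ]; then
    cat "/proc/irq/$irq/smp_affinity"
fi
```

To allow several CPUs at once, you add the individual masks together, so 3 would mean the first and the second processor.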

Of course, your kernel must be capable of symmetric multi-processing, which is a default in all new kernels. It's not a given for older kernels like 2.6.16 and 2.6.18 in previous but still much used enterprise editions of SUSE and RedHat.

More reading here: http://www.cs.uwaterloo.ca/~brecht/servers/apic/SMP-affinity.txt.

Memory management

Linux memory management is the blackest of magics in the world. But it's a fun thing, especially if you know what you're doing. Like I mentioned before, no one setting will work for everyone. There's no golden rule. The system defaults are as good as empirically possible for the widest range of uses, so you should stick with that.

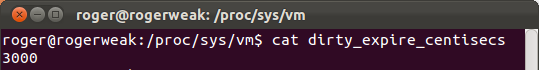

If however, you feel really adventurous, you might want to explore the kernel tunables under /proc/sys/vm. There are several of those.

The swappiness parameter tells you how aggressively your system will try to swap pages. The values range from 0 to 100. In most cases, your disk will always be the bottleneck, so it will make little difference. Then, there's the dirty_ratio tunable, which tells the percentage of total system memory that can be taken by dirty pages. Once this limit is hit, the system will start flushing data to the disk. Another parameter that is closely related to the dirty_ratio is dirty_expire_centisecs, which determines the max. age of dirty pages before they are flushed. The system will commit the dirty data based on the first of the two parameters to be met, which will most likely be the expire time.

A mental exercise: the default dirty_ratio on Linux is 40%, while the default expire tunable is set to 3000 centiseconds. A centisecond is 1/100 of a second or 10ms, so we have 30 seconds total. If you have a machine with 4GB RAM, then 1.6GB will be dedicated to dirty pages at most. Now, this means that whatever you're writing, it needs to create some 55MB of data every second to exceed this threshold in the thirty-second period for the kernel flushing thread to wake and start writing to the disk. In most cases, you will rarely have such aggressive writes. Some notable examples include large copies, video rendering and alike. In daily use, hardly ever. If you have more than 4GB RAM, say 8-16GB, then this becomes even less likely.
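The arithmetic from the exercise can be reproduced with a few lines of shell, which also makes it easy to plug in your own numbers. The values below are the ones quoted above, not necessarily what your system uses, so the last part prints the live settings for comparison. Shell arithmetic is integer only, hence the slightly rounded-down results.

```shell
ram_mb=4096        # 4GB of RAM, as in the exercise
dirty_ratio=40     # percent, the default quoted above
expire_cs=3000     # dirty_expire_centisecs

# Integer arithmetic, so fractions are truncated.
dirty_mb=$(( ram_mb * dirty_ratio / 100 ))
expire_s=$(( expire_cs / 100 ))
rate_mb_s=$(( dirty_mb / expire_s ))

echo "Dirty page ceiling: ${dirty_mb}MB"
echo "Expiry window: ${expire_s}s"
echo "Write rate needed to hit the ratio first: about ${rate_mb_s}MB/s"

# Compare with the values on this machine, where readable.
for t in dirty_ratio dirty_expire_centisecs; do
    if [ -r "/proc/sys/vm/$t" ]; then
        echo "$t = $(cat "/proc/sys/vm/$t")"
    fi
done
```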

This exercise also tells you whether you really need that high dirty_ratio, how to set the other tunables and more. Having too many dirty pages also means very long and sustained writes when the time comes to commit them to disk. Food for thought, fellas. There's no golden rule.

As you can see, I'm breezing through these extremely lengthy and complex topics, but the idea is not to write a PhD on memory management, but give you a very brief sampling of the possibilities, so you can later explore and use them.

You can make changes by echoing values to /proc or using sysctl.
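Both methods look roughly like this, using swappiness as the guinea pig. Reading works for any user; writing needs root, so those lines are left as comments. The value 10 is an arbitrary example, not a recommendation.

```shell
# Read a tunable straight from /proc.
swappiness=$(cat /proc/sys/vm/swappiness)
echo "vm.swappiness via /proc: $swappiness"

# The same value through sysctl, where the tool is installed.
if command -v sysctl >/dev/null 2>&1; then
    sysctl vm.swappiness
fi

# Writing needs root, so these lines are left commented out:
#   echo 10 > /proc/sys/vm/swappiness
#   sysctl -w vm.swappiness=10
```

Changes made this way last until the next reboot.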

A very geeky read (direct link) for RHEL4, but still very much relevant today.

Another thing you may want to attempt is to allow/disallow memory overcommitment. Normally, Linux uses smart heuristics for managing overcommitment, but if you are really worried about how your system hands out its quiche to processes, then you can disable the overcommitment or set a ratio. I would recommend against any changes, unless you have very strict requirements, you cannot afford to have the OOM mechanism kick in, etc.
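To see where your system stands before deciding anything, you can read the two relevant tunables, overcommit_memory and overcommit_ratio. The describe_overcommit helper below is made up for this sketch; it simply translates the three documented modes into words.

```shell
# Translate the overcommit_memory mode into plain words.
describe_overcommit() {
    case "$1" in
        0) echo "heuristic overcommit (default)" ;;
        1) echo "always overcommit" ;;
        2) echo "strict accounting, limited by overcommit_ratio" ;;
        *) echo "unknown mode" ;;
    esac
}

mode=$(cat /proc/sys/vm/overcommit_memory)
ratio=$(cat /proc/sys/vm/overcommit_ratio)
echo "overcommit_memory = $mode: $(describe_overcommit "$mode")"
echo "overcommit_ratio  = $ratio"
```

In mode 2, the kernel refuses allocations beyond swap plus overcommit_ratio percent of RAM, which is what setting a ratio means in practice.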

I/O scheduling

Another geeky item, best left alone. But if you must, please read on. First of all, most I/O elevator algorithms assume platter-based disks, so if you're running with SSD, the rules of the game change, but this has been taken into account in recent kernels. Assuming you're running on plain old mechanical hardware, then you have one simple goal: as few seeks as possible, to minimize access times and wear, which translates into better responsiveness for the user. But then, some of your machines might be running pure computation tasks, so the responsiveness might not be an issue.

But in general, we want to perform write operations in bursts, with as much data as possible. There are four available schedulers:

noop - the most basic one; it dispatches requests as they come, and it is normally good for disks on key (USB flash drives) and systems with heavy CPU usage.

anticipatory - introduces longer delays, so there's more chance of starvation; however, it tries to maximize throughput and reduce seeks.

cfq - short for Completely Fair Queuing; it relies on process behavior and can be used with ionice to achieve balanced throughput. It does not prefer writes or reads.

deadline - this one tries to dispatch requests as quickly as possible, treating tasks as real-time, in order to avoid process starvation.

You can issue the change per disk:

echo <scheduler> > /sys/block/<device>/queue/scheduler

For instance:

echo cfq > /sys/block/sdb/queue/scheduler
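Before changing anything, it helps to know what each disk is using right now. The kernel lists the available schedulers in the same file and marks the active one with square brackets. The active_scheduler helper is a name I made up for this sketch.

```shell
# Extract the active scheduler, which the kernel marks with square brackets.
active_scheduler() {
    sed -n 's/.*\[\(.*\)\].*/\1/p'
}

# Walk all block devices and print what each one offers and uses.
for f in /sys/block/*/queue/scheduler; do
    [ -r "$f" ] || continue
    dev=${f#/sys/block/}
    dev=${dev%%/*}
    echo "$dev: $(cat "$f") -> active: $(active_scheduler < "$f")"
done
```

The list of names you see will depend on your kernel version and on which schedulers were compiled in.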

All this sounds dandy, but the real challenge is figuring out what your machines are doing and matching the behavior accordingly. After you have made the change, you will need to test your results. In the Linux world, you will most commonly find cfq or anticipatory as the default choice.

Of course, if you make changes to the scheduler, then you might also want to tweak the readahead settings, both the readahead max. value and the throughput value, as well as the number of simultaneous I/O requests. The corresponding tunables include nr_requests, read_ahead_kb and inode_readahead_blks. Some of the values will be limited by the filesystem choice. Let me disappoint you and tell you that you will have to work hard to see significant improvements.
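The block-layer tunables sit right next to the scheduler file, so you can record the current values before experimenting. This sketch only reads nr_requests and read_ahead_kb; inode_readahead_blks belongs to the filesystem rather than the disk queue and is not covered here. The show_queue_tunables function is a hypothetical helper.

```shell
# Print the queue tunables found in one queue directory.
show_queue_tunables() {
    # $1 is a queue directory such as /sys/block/sdb/queue
    for t in nr_requests read_ahead_kb; do
        if [ -r "$1/$t" ]; then
            echo "$t = $(cat "$1/$t")"
        fi
    done
}

# Report the values for every block device on the system.
for q in /sys/block/*/queue; do
    [ -d "$q" ] || continue
    echo "== $q"
    show_queue_tunables "$q"
done
```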

Some reading on schedulers: Linux Journal - I/O Schedulers.

Filesystem mount options

Like the disk, we want speed. That's the basic driver here. So let's see what kind of options we can use. The most notable focus is on the journaling capabilities of modern filesystems.

This is another black magic, but something you can test with relative safety. Choose any old disk, preferably with a single partition to avoid masking results by typical disk speed bottlenecks. Then, test various mount options. Some of the notable performance boosters so to speak include:

writeback mode - only the metadata is journaled, and the data blocks are written directly to their location on the disk. This preserves the filesystem structure and avoids corruption, but data corruption can occur, for example, if the system crashes after the metadata is journaled but before the data block is written.

ordered mode - metadata journaling is done after the data is written to the disk. In this way, data and filesystem are guaranteed consistent after a recovery.

data mode - both metadata and data are journaled. This mode offers the greatest protection against file system corruption and data loss but can suffer from performance degradation, as all data is written twice (first to the journal, then to the disk).

Some more reading: Anatomy of Linux journaling filesystems.

All right, now that we know what we need, we can simply mount a filesystem with the writeback option. You should test extensively, to make sure things work out fine, or at the very least, use this option for filesystems with heavy access but that might not be containing critical data.

mount -o data=writeback /dev/<device> /<mountpoint>
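To verify which options a filesystem actually ended up with, you can look at the mounts table. The list_ext_mounts helper below is my own naming. It reads /proc/mounts by default and accepts another file in the same format as an argument. Note that the journaling mode may not be listed when the filesystem runs with its default.

```shell
# Print mount point and options for every ext2/3/4 filesystem in a mounts table.
list_ext_mounts() {
    awk '$3 ~ /^ext[234]$/ { print $2 ": " $4 }' "${1:-/proc/mounts}"
}

list_ext_mounts

# A matching /etc/fstab line for a permanent setup might look like this
# (device and mount point are placeholders):
#   /dev/<device>  /<mountpoint>  ext3  defaults,data=writeback  0  2
```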

You might also want to consider noatime and nodiratime, but again don't listen to one geek trying to impress you with words, do your own testing and prove everyone else wrong.

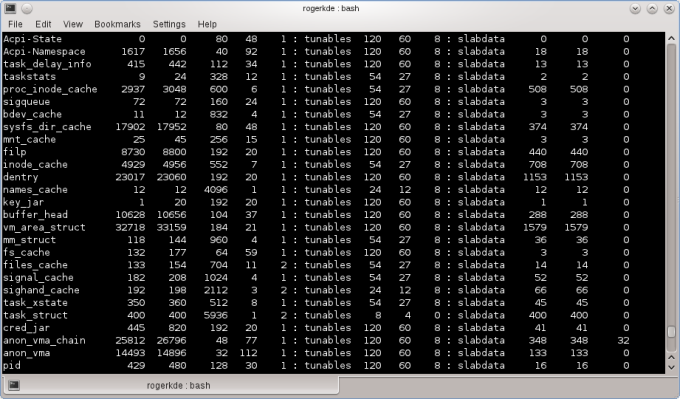

And I guess that would be enough for today. Other items that you might want to look at include slabinfo/slabtop, huge pages and Translation Lookaside Buffers (TLB). That's different from LTB, which stands for Tomato Lettuce and Bacon, a different kind of hack. Some screenshots and we're done here.

Conclusion

There you go, another lovely set of geekiness. Again, the real value in these hacks is the exposure not the actual application. Be aware of the functionality, study it, and then apply it to your personal or business needs one day. And remember that no two computers and use cases are the same, so blind copy & paste will not work.

That would be all, I guess. You are also welcome to check the first and the second article, as well as the whole series of so-called super-duper admin tools. We will also have an extensive review on the GNU Debugger (gdb) soon. Stay pretty.

Cheers.